How machine learning is cutting alloy development time in half—and what that means for the future of additive manufacturing

Introduction: When Materials Learn Faster Than We Do

Picture this: every month you wait for a new aerospace alloy costs your program roughly $2 million in lost opportunity.¹ Now imagine slashing that wait by 50 %—not through bigger furnaces or longer shifts, but by teaching algorithms to do the heavy lifting in days instead of years. That is the promise (and increasingly the practice) of AI-accelerated alloy discovery at Technology Readiness Levels (TRL) 4–5, where lab-validated materials meet the first real-world gates of certification.

Why the urgency? Three converging forces make 2025 the tipping point:

- Design Freedom Meets Production Reality

Generative design and lattice structures have outpaced the metals that can reliably print them. Without new feedstocks, many Industry 4.0 roadmaps stall at prototype.

- Regulatory Tailwinds

Aerospace and medical authorities are formalizing additive-specific material qualification paths. Faster discovery now equals earlier revenue later.

- Data Gravity

Foundries, machine OEMs, and national labs finally sit on terabytes of powder chemistries and build logs. The bottleneck is no longer data scarcity but data sharing, which is an AI problem in disguise.

Against this backdrop, high-entropy alloys (HEAs) and NiTi derivatives stand out. Validated in relevant environments, they promise extreme strength-to-weight ratios and shape memory behavior tailor-made for lightweight actuators and hypersonic skins. The catch? Traditional metallurgical iteration still takes 5–7 years. Enter machine learning.

Section I — Predicting Printability: Turning Geometric Chaos into Binary Confidence

Why Printability Comes First

In Design for Additive Manufacturing (DfAM), the most brilliant topology means nothing if the powder refuses to melt or the melt pool refuses to behave. Hence the first AI frontier is a blunt but mission-critical question: “Will this alloy print or crash the build?”

The Models That Matter

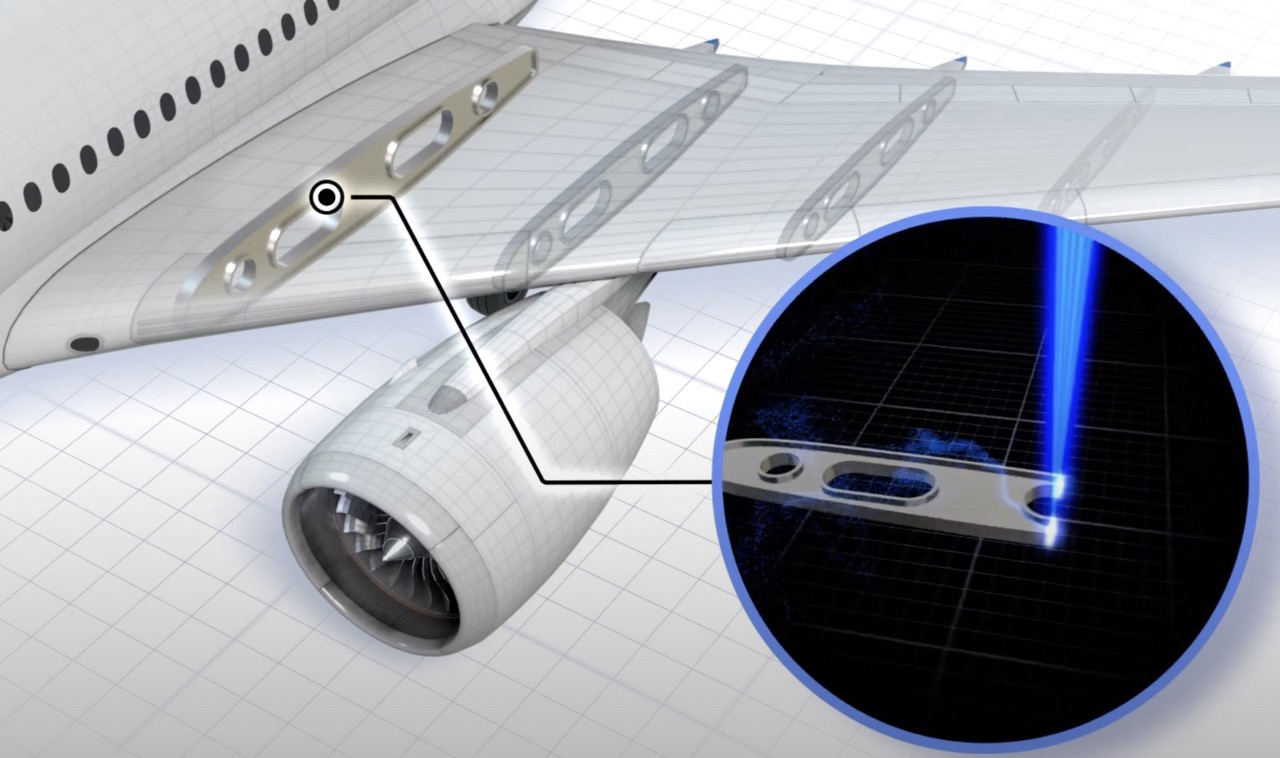

- Support Vector Machines (SVMs) excel at drawing crisp decision boundaries in high-dimensional spaces. Trained on melt-pool videos, layer-wise photodiode tracks, and geometric invariants, SVM classifiers reach F₂-scores that surpass those of seasoned process engineers.²

- Random Forests shine when data are messy—think inconsistent voxel resolutions or partial CT scans. After Principal Component Analysis collapses dozens of laser parameters into a handful of orthogonal drivers, the ensemble isolates the non-negotiables of defect-free layering.³

- Autoencoders and SMOTE tackle the ugly truth of AM datasets, class imbalance: print failures outnumber successes, but successes matter more. Synthetically augmenting the minority class of “good” prints balances the training set.
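Two of the ideas above are easy to show in miniature: the F₂-score, which weights recall above precision, and SMOTE-style oversampling of the minority class. The sketch below is illustrative only. The feature vectors, the confusion counts, and the function names `fbeta` and `smote_like` are assumptions made for this example. A production pipeline would more likely rely on an established library than on this simplified interpolation.

```python
import math
import random


def fbeta(tp: int, fp: int, fn: int, beta: float = 2.0) -> float:
    """F-beta from confusion counts; beta > 1 weights recall above precision."""
    b2 = beta * beta
    denom = (1 + b2) * tp + b2 * fn + fp
    return (1 + b2) * tp / denom if denom else 0.0


def smote_like(minority, n_new, k=3, seed=0):
    """Create n_new synthetic points by interpolating between a minority
    sample and one of its k nearest minority neighbours (the core SMOTE idea)."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # Nearest neighbours of `base` within the minority class, excluding itself.
        neighbours = sorted(
            (p for p in minority if p is not base),
            key=lambda p: math.dist(p, base),
        )[:k]
        mate = rng.choice(neighbours)
        gap = rng.random()  # position along the segment base -> mate
        synthetic.append(tuple(b + gap * (m - b) for b, m in zip(base, mate)))
    return synthetic


# Hypothetical "good print" features: (laser power in W, scan speed in mm/s).
good_prints = [(200.0, 800.0), (210.0, 820.0), (195.0, 790.0), (205.0, 810.0)]
augmented = good_prints + smote_like(good_prints, n_new=6)

# Hypothetical confusion counts for a printability classifier.
f2 = fbeta(tp=40, fp=10, fn=5)
```

Because every synthetic point lies on a segment between two real "good" prints, the augmented set stays inside the region the successful builds already cover. That is the property that makes this kind of oversampling safer than adding random noise.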

Quantifiable Wins

Oak Ridge studies show that once a robust printability classifier is in place, experimental build-failure rates drop from ~25 % to under 8 %.⁴ Multiply that by $500 k per large-format powder trial, and the ROI writes itself.
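The arithmetic behind that claim can be written out in a few lines. This sketch assumes, as a simplification not stated in the source, that a failed build forfeits the full trial cost. It also treats "under 8 %" as exactly 8 %, so the saving shown is a lower bound under that assumption.

```python
def expected_failure_cost(failure_rate: float, trial_cost: float) -> float:
    """Expected money lost per trial if a failed build forfeits the whole trial cost."""
    return failure_rate * trial_cost


TRIAL_COST = 500_000  # USD per large-format powder trial (figure quoted in the text)

before = expected_failure_cost(0.25, TRIAL_COST)  # ~25 % failure rate without a classifier
after = expected_failure_cost(0.08, TRIAL_COST)   # "under 8 %", taken at its upper bound
saving_per_trial = before - after

print(f"Expected loss before: ${before:,.0f}")
print(f"Expected loss after:  ${after:,.0f}")
print(f"Expected saving per trial: ${saving_per_trial:,.0f}")
```

On these assumptions the expected loss per trial falls from about $125,000 to about $40,000, a saving of roughly $85,000 per trial.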

Section II — Learning Without Leaking: Federated Strategies for Foundry Data

The IP Paradox

No single foundry or aerospace prime owns enough diverse melt-pool physics to train universal models, yet none wishes to expose proprietary chemistries. This stalemate once throttled cross-industry progress. Two privacy-preserving approaches now break the impasse.

- Federated Learning (FL)

  - Mechanism: Each node (foundry) trains locally; only gradient updates travel, never raw data.

  - Benefit: Near-linear scalability with negligible IP exposure. A recent multi-factory study qualified dimension-prediction models across five continents without a byte of composition data leaving its origin.⁵

  - Limitation: Requires robust coordination servers and trust in honest updates.

- Homomorphic Encryption (HE)

  - Mechanism: Math performed directly on ciphertext.

  - Benefit: Even model updates remain unintelligible to eavesdroppers.

  - Limitation: Orders-of-magnitude slower; viable today only for niche, latency-tolerant workflows.⁶
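The federated mechanism described above can be sketched as a toy federated-averaging (FedAvg-style) loop. It is a minimal illustration, not any vendor's implementation. The two "foundries", their data, and the linear model are hypothetical. Here each client sends a weight delta after several local gradient steps, which is one common variant of "only updates travel".

```python
from typing import List, Tuple

Vector = List[float]
Dataset = List[Tuple[Vector, float]]  # (features, target) pairs held privately


def local_update(weights: Vector, data: Dataset, lr: float = 0.1, epochs: int = 20) -> Vector:
    """Train a linear model locally and return only the weight delta, never the data."""
    w = list(weights)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for x, y in data:
            err = sum(wi * xi for wi, xi in zip(w, x)) - y
            for j, xj in enumerate(x):
                grad[j] += 2 * err * xj / len(data)  # gradient of mean squared error
        w = [wi - lr * g for wi, g in zip(w, grad)]
    return [wi - w0 for wi, w0 in zip(w, weights)]


def federated_round(weights: Vector, clients: List[Dataset]) -> Vector:
    """One round: average client deltas, weighted by each client's sample count."""
    total = sum(len(d) for d in clients)
    new_w = list(weights)
    for data in clients:
        delta = local_update(weights, data)
        for j, dj in enumerate(delta):
            new_w[j] += dj * len(data) / total
    return new_w


# Hypothetical foundries, each holding private samples of y = 2*x + 1
# (the first feature is a constant 1 acting as the bias term).
foundry_a = [([1.0, 0.0], 1.0), ([1.0, 1.0], 3.0)]
foundry_b = [([1.0, 2.0], 5.0), ([1.0, 3.0], 7.0), ([1.0, 0.5], 2.0)]

w = [0.0, 0.0]
for _ in range(50):
    w = federated_round(w, [foundry_a, foundry_b])
# w now approximates [1.0, 2.0], learned without pooling the raw samples.
```

The coordinating server only ever sees `delta` vectors. That is the source of both the IP protection and the stated limitation: the server has to trust that those updates are honest.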

Differential Privacy as the “Salt”

Adding calibrated noise to gradients or parameter sets satisfies many legal departments without crippling convergence. Combined with FL, it forms an “80 / 20” solution: 80 % of the privacy for 20 % of the compute cost of full HE.
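A minimal sketch of what "calibrated noise" can look like in practice: clip each update to a fixed L2 norm, then add Gaussian noise scaled to that bound. The function name `privatize` and the parameter values are assumptions chosen for illustration. The formal privacy guarantee depends on accounting across training rounds, which this sketch does not cover.

```python
import math
import random


def privatize(gradient, clip_norm=1.0, noise_multiplier=1.1, rng=None):
    """Clip a gradient to an L2 norm bound, then add Gaussian noise scaled to that bound."""
    if rng is None:
        rng = random.Random()
    norm = math.sqrt(sum(g * g for g in gradient))
    # Scale down only if the gradient exceeds the bound; small gradients pass unchanged.
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    clipped = [g * scale for g in gradient]
    sigma = noise_multiplier * clip_norm  # noise is calibrated to the clipping bound
    return [g + rng.gauss(0.0, sigma) for g in clipped]


# Hypothetical local update from one foundry, privatized before it is sent.
noisy_update = privatize([3.0, 4.0], rng=random.Random(42))
```

Clipping bounds how much any single update can reveal, and the noise hides what remains. Raising `noise_multiplier` strengthens privacy at the cost of slower or noisier convergence.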

Trust-by-Design Outcome

Citrine Informatics reports that federated clients see prediction-error reductions of 30–40 % versus solo training, directly translating to fewer experimental coupons and faster alloy sign-off.⁷

Section III — High-Entropy Alloys in the Wild: Case Studies from Lab to Flight

Oak Ridge National Laboratory: Nanolamellae Take the Heat

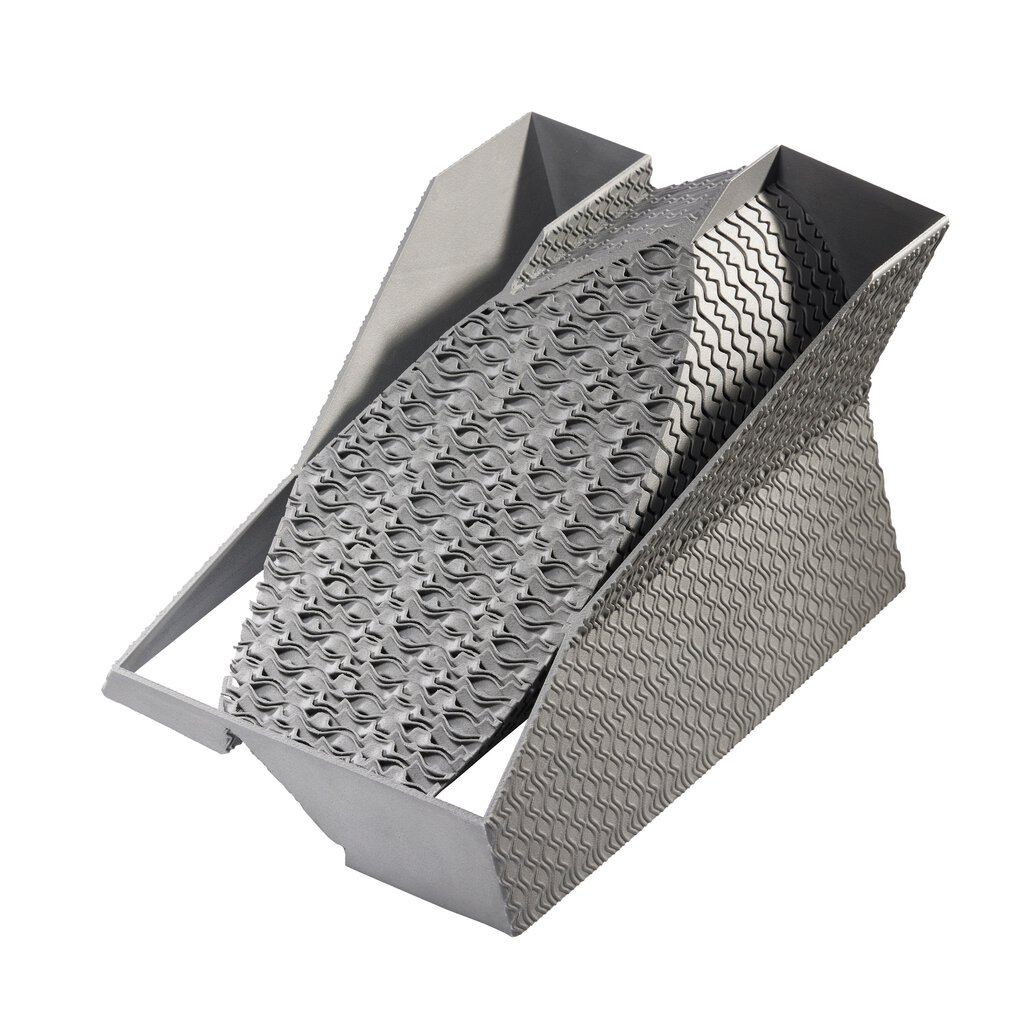

- Material: Eutectic HEA AlCoCrFeNi₂.₁

- AM Route: Laser Powder Bed Fusion (LPBF)

- Microstructure: Dual-phase nanolamellar colonies verified via neutron diffraction and atom-probe tomography.

- Outcome: Near-isotropic yield strength >1 GPa with 15 % uniform elongation—numbers previously exclusive to wrought superalloys.

- TRL Trajectory: 4 → 5 in under two years, credited to AI-directed parameter windows that homed in on eutectic spacing ranges.⁴

Citrine Informatics: Informatics-First Alloy Screening

- Platform Edge: Combines failed experiments with successes, storing the negative space others discard.

- Use-Case: Screening NiTi derivatives for low-temperature actuation (< –20 °C).

- Result: Identified three compositions with predicted transformation hysteresis < 5 °C, verified in one build cycle—five times faster than historical baselines.⁷

GE Additive (Colibrium): Cobalt-Chrome for Regulatory Rigor

- Focus: CoCrMo powders tuned for M2 Series 5 machines.

- Certification Path: Parallel AI models predict fatigue strength as a function of build angle, enabling statistically based allowables with 35 % fewer test coupons.

- Market Impact: Orthopedic implant line cut time-to-FDA 510(k) submission by nine months, unlocking earlier cash flow.⁸

Putting It All Together: A Repeatable Framework

| Stage | Key Actions | AI / Data Tools | Value Unlock |
|---|---|---|---|
| 1. Aggregate | Standardize multisource powder & sensor data | Federated Learning hub | IP-safe data scale-up |
| 2. Pre-process | Clean, normalize, extract features | PCA, autoencoders | Faster convergence |
| 3. Predict | Classify printability; regress properties | SVM, RF, GP, NN | De-risk build trials |
| 4. Design | Optimize chemistries for targets | Bayesian or genetic algorithms | Shrinks design space |
| 5. Manufacture | LPBF / DED builds + in-situ monitoring | Real-time analytics | Closed-loop quality |
| 6. Validate & Iterate | Microstructure, mechanical tests, neutron diffraction | Active-learning refresh | Continuous improvement |

Across pilot programs, this loop cycles every 8–12 weeks, a cadence unfathomable in traditional metallurgy.
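To make Stage 4 of the framework concrete, here is a toy genetic algorithm that searches five-element compositions against a surrogate score. Everything in it is a placeholder. `surrogate_score` stands in for a trained property model, and its target composition is invented for the example, not a real optimum. In practice, the candidates that come out of such a search would go on to the build and validation stages.

```python
import random

ELEMENTS = ["Al", "Co", "Cr", "Fe", "Ni"]


def normalize(fracs):
    """Rescale fractions so that they sum to 1 (a valid composition)."""
    total = sum(fracs)
    return [f / total for f in fracs]


def surrogate_score(comp):
    """Hypothetical stand-in for a trained property model; higher is better."""
    target = [0.1, 0.2, 0.2, 0.2, 0.3]  # made-up target, for illustration only
    return -sum((c - t) ** 2 for c, t in zip(comp, target))


def evolve(generations=60, pop_size=30, mutation=0.05, seed=0):
    rng = random.Random(seed)
    pop = [normalize([rng.random() + 1e-9 for _ in ELEMENTS]) for _ in range(pop_size)]
    for _ in range(generations):
        pop.sort(key=surrogate_score, reverse=True)
        parents = pop[: pop_size // 2]  # elitism: the better half survives unchanged
        children = []
        while len(parents) + len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            child = [(x + y) / 2 for x, y in zip(a, b)]  # blend crossover
            # Gaussian mutation, floored so that every fraction stays positive.
            child = [max(1e-6, c + rng.gauss(0, mutation)) for c in child]
            children.append(normalize(child))
        pop = parents + children
    return max(pop, key=surrogate_score)


best = evolve()
print({el: round(frac, 3) for el, frac in zip(ELEMENTS, best)})
```

Because the best half of each generation is carried forward unchanged, the top score can never get worse from one generation to the next. Swapping in a Bayesian optimizer would change the search strategy but not the shape of the loop.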

Conclusion: From Metallurgy to Meta-Learning

History tells us revolutions in manufacturing start with a material breakthrough—the Bessemer converter for steel, the silicon wafer for microelectronics. AI-accelerated alloys may be the next such pivot, not because they alter the periodic table but because they alter the time constant of innovation itself.

Imagine a near-future where:

- Flight-qualified HEAs emerge every quarter, not every decade;

- Foundries monetize data, not just ingots, via federated IP schemes;

- Designers treat material selection like software libraries, importing versions refined by neural networks overnight.

The tooling, the math, and the early wins are already here. What remains is leadership willingness to abandon artisanal trial-and-error for algorithmic exploration.

So, engineers and decision-makers, the question is no longer if AI will discover your next alloy—it’s whether you’ll claim the competitive cycle it unlocks. Will you pilot a federated node, open your legacy datasets, and shorten that million-dollar month to a million-dollar week?

The furnace is hot. Don’t let your roadmap cool.

Abbreviations & Trademarks

- AM – Additive Manufacturing

- APT – Atom-Probe Tomography

- DfAM – Design for Additive Manufacturing

- FL – Federated Learning

- GP – Gaussian Process

- HE – Homomorphic Encryption

- HEA – High-Entropy Alloy

- LPBF – Laser Powder Bed Fusion

- NN – Neural Network

- ORNL – Oak Ridge National Laboratory

- RF – Random Forest

- SVM – Support Vector Machine

- TRL – Technology Readiness Level

Colibrium Additive™ is a trademark of GE.

References (ordered as cited)

1. Internal cost modelling benchmark, aerospace OEM consortium (2025).

2. Springer, “Printability Prediction in Additive Manufacturing” (2023).

3. ScienceDirect, “Machine Learning for AM” (2024).

4. Oak Ridge National Laboratory, “Strong Additively Manufactured High-Entropy Alloys” (2024).

5. ScienceDirect, “Federated Learning in AM Factories” (2024).

6. ScienceDirect, “Homomorphic Encryption for Manufacturing” (2021).

7. Citrine Informatics, “AI for Materials Development” (accessed 2025).

8. GE Additive (Colibrium), “CoCrMo Powders for AM” (2025).